Data science is a powerful tool for any business. You can even hire an interim CIO to elevate data science and machine learning as a core organizational capability for your business. However, in order to apply data science to the business, we have to develop a tool which ordinary users can easily use and gain benefits from looking into the data.

Data visualization tools such as Tableau or PowerBI are great examples of how the data exploration tool can add huge value to the data in any business.

I recently got a chance to develop yet another data exploration tool for stock market data from scratch. Since I had to deal with everything from the server to developing API to developing frontend application, I felt this knowledge and experience are valuable to me and could be helpful to other people looking to develop their data science web applications.

In this blog post, I will walk you through the process and development of building the web application I built a few weeks ago. If you are interested in seeing the code, feel free to let me know via Twitter (@woraperth). I may consider open-sourcing the code on my Github later.

The Stock Analyzer Web Application

This is the web application I built. It is not the most beautiful, but I hope the interface is simple enough for users to understand.

The live version was shut down because it cost me if I wanted to leave the API server open. Instead, I decided to write this blog so I can reuse some parts of this project later.

Why do we need this application?

The analysts would like to analyze the companies in the stock market who could be their potential customers. By looking at each company one by one, it will take a lot of time. They require easy to use tool to help them speed up this process.

The goal of “Stock Analyzer” is to leverage the stock market data and build the simple interface to assist analysts in order to find the right customers.

What is the data we have and where to find them?

The data consisted of the stock price on each day for each stock in each stock market.

The stock market data can be acquired from the finance website such as Yahoo! Finance. It lets you specify the date range and download the CSV file for historical data on each stock.

The Project Architecture: React & NodeJS & MySQL

The most important part is to plan what technologies I required, and how I can link them together. I decided to challenge myself by using NodeJS to build the API and send the data to the React frontend. It was my first time writing NodeJS, and also my first time building a web application in a modern way (Decoupling the backend from the frontend).

I saw many modern websites developed this way, and their websites are very fast. I knew I could struggle to develop it since I have not done this before, but it would benefit me in the long term to learn how it works.

Here is how this application will work behind the scene: (Please follow along with the architecture image above, from left to right & top to bottom)

- The user visits a website and sees the frontend built by React

- When the user requests the data, the frontend will connect with the API endpoints built by NodeJS to request the data

- NodeJS will query the data from the MySQL database, then send the result back to frontend

- MySQL database will load the data from the text file into the database, for every fixed amount of time e.g. daily.

The project preparation & organization: DigitalOcean & Git

Since I planned to decouple the backend from the frontend, I can setup the backend and frontend on the different servers without any problem.

For the backend, I chose to setup cloud server on DigitalOcean (service similar to AWS, but a lot simpler to use). I like DigitalOcean because it is easy to setup, and the support team provided a lot of useful articles on how to install different software on their cloud server.

Here is the list of articles I used to install NodeJS, Nginx, and MySQL:

- NodeJS Installation tutorial (This will also teach you to use PM2, which helps to serve the NodeJS application in the background. So we do not have to keep the Terminal window open): https://www.digitalocean.com/community/tutorials/how-to-set-up-a-node-js-application-for-production-on-ubuntu-16-04

- Nginx Installation tutorial (We need Nginx to do the reverse proxy in order to expose NodeJS port. We have to enable firewall first, then allow SSH. Otherwise, we will be locked out of the server): https://www.digitalocean.com/community/tutorials/how-to-install-nginx-on-ubuntu-16-04

- MySQL Installation tutorial: https://www.digitalocean.com/community/tutorials/how-to-install-mysql-on-ubuntu-16-04

For the Nginx part above, make sure to run this command to allow SSH:

sudo ufw enable sudo ufw allow ssh

For the frontend, I can use any web server that can serve HTML page.

The development process & tech stack

In order to develop this application, I first set up the git repository and server environment by following the tutorials I mentioned above. Then I started developing the API endpoints. After testing the API, I developed the frontend application to connect to the API.

The technologies I used are MySQL for the database, NodeJS for the backend API, and React for the frontend. There are reasons behind selecting these technologies:

Why MySQL

- Because the stock data is in a structured data file (CSV), it will sit comfortably inside a relational database such as MySQL

- MySQL is open-sourced, popular (= huge community), and support standard SQL commands. It will be easy for us to switch to any database software that supports SQL

- By using ‘mysql’ module in NodeJS, the inputs can be automatically escaped to prevent SQL injection

Why NodeJS

- Another popular programming language to develop backend. Used in the big companies such as LinkedIn and eBay

- Fast to run and support parallel queries

- Simple to build API using Express

- Use JavaScript syntax, which makes the code and knowledge shareable with the frontend

- Most importantly, I haven’t used it before and would like to learn how to use it

Why React

- Easy to maintain, extend, reuse components on the web page

- Huge library of third-party components, which can be plug-and-play

- Good user experience since it is extremely fast

- JSX is AWESOMEEEEEE

The Data Pipeline: Scheduling Data Loading with NodeJS

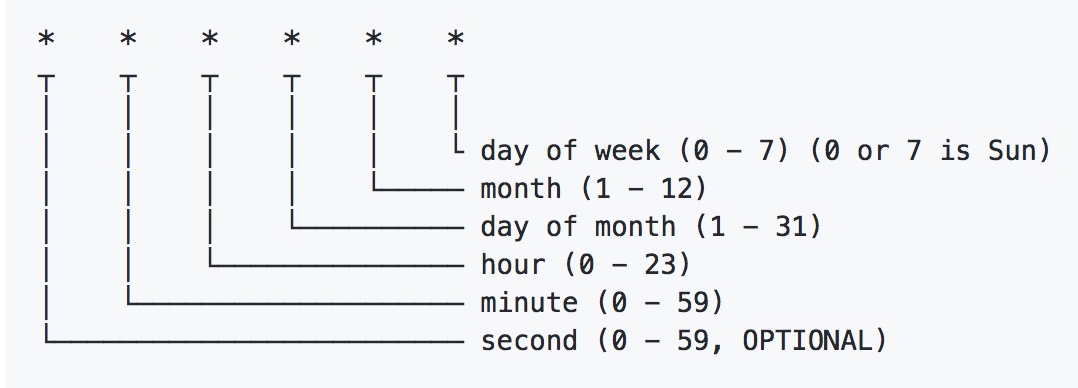

I used the NodeJS package node-scheduler to schedule loading data into MySQL database every 1 AM. The syntax is the same as setting up cronjob. Therefore, we can use the online tool to help writing the cron such as crontab.

Note that I am a newbie in data engineering area. Therefore, this might not be the most effective way to schedule the task. I also found that loading the data through NodeJS requires more RAM than loading data directly with MySQL command line.

The Logging with NodeJS

Logging is very useful especially when we have the process running in the background e.g. data loading. We can always open the log file to see if something went wrong while we were sleeping.

I used the NodeJS package winston to manage the log. It is quite convenience that winston allowed me to log error seperated from the warning & info.

Here are 2 log files in this project:

- logs/error.log – SQL Error

- logs/combined.log – Warning & Info

The Extra Feature: Stock Comparison

The first version of Stock Analyzer can see one stock’s performance at a time:

I felt that it was quite dry to show only one stock at a time. It is also not giving much value to the analysts who will be using this tool.

Recently, I read the very good book called “Outside Insight” which talked about how can we leverage the power of external data. There was one topic about benchmarking that interest me.

“The data point only gains value if you can see it in comparison to other data points and build a relation between them. I do not believe that an organization can exist without benchmarking.”

– Jan-Patrick Cap, quoted in Outside Insight (2017)

I decided to develop the application further to add an ability to compare multiple stocks. This way the analysts can see how the companies are ACTUALLY performing in the market. In some cases, we may found that the company with really good performance could be very small gain when comparing to other companies.

What I learned from this project

This is a great project and I learned to use new technologies and a new way to link different technologies together. Compare to the traditional website with CMS backend, I found that React with NodeJS API is very fast at a small cost (I paid $5/month for the cloud). However, it could be slow when there is a large traffic.

In the future, I could improve the data engineering process to be up to the industry standard. Because I had no experience in this area before, I am interested to learn more about data engineering. It is also difficult to find the practical guide. If you know a good place to study this, please feel free to share 🙂

I also would like to explore other database software to see which one gives the best performance. I was thinking about using Postgres, or NoSQL such as MongoDB which is becoming popular, or multicloud data storage such as BigQuery might be a good candidate as well since this application is more of OLAP than OLTP.

I hope this blog will be useful for people who are looking for a way to develop data science web application. Feel free to ping me on Twitter @woraperth for any question.

Appendix

Here is where I note the useful stuff I learned from this project. It will come in handy if you would like to develop the project using the same technology stack.

Frequent Problems

- Error ‘listen EADDRINUSE 8080’ after running ‘npm start’

Run ‘killall -9 node’ usually fix the problem. Read more on StackOverflow. - Require to wait for many tasks to be done before doing the next part

We can use Promise to handle Async. I wrote a blog with sample code long ago, yet it still works perfectly (except we don’t need polyfill anymore in 2018) - Not enough RAM when trying to load the data via NodeJS API endpoint

If the browser tab dies, it is fine since this is due to the browser timeout. If the node dies, increase droplet’s RAM to 2GB or 4GB and try again. - The server time is not in Melbourne time

Run this command to set timezone: sudo dpkg-reconfigure tzdata

Check the current server time: timedatectl - How to check if MySQL is running

Run this command to check MySQL status: systemctl status mysql.service

If MySQL is not running, run this command to start MySQL: sudo systemctl start mysql

Leave a Reply